Logging PHP Arrays

Published: November 30, 2016

How should I log a PHP array?

If you work as a PHP developer this is probably a question you’ve asked yourself before. There are quite a few guides you’ll find online in regards to this subject.

- How to print array contents in log file

- Using

error_logwithprint_rto gracefully debug PHP - Logging an Array in Laravel

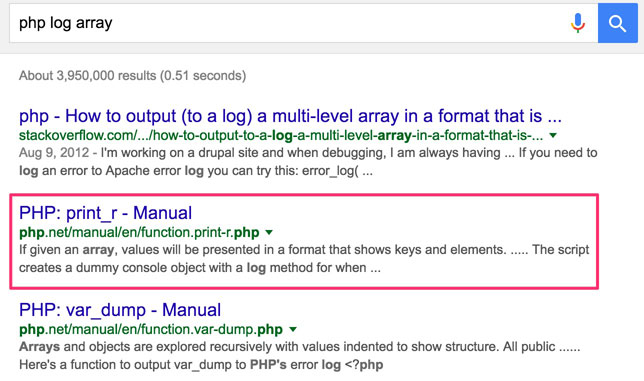

Typically, they point to PHP’s print_r function.

Unfortunately, they’re wrong ![]()

PSA: print_r() for logs sucks...

— Max Chadwick (@maxpchadwick) November 30, 2016

So why, exactly, does print_r suck for logging? Allow me to elaborate.

print_r’s Stated Purpose

Here’s the description of the print_r function pulled from the PHP documentation.

Prints human-readable information about a variable

Sounds like what we’re looking for when logging an array, right?

Wrong. The key problem is that print_r is intended to display an array in a human-readable format. And that, my friends, is where the trouble starts.

Who Actually Reads Log Files?

Contrary to popular belief, raw log files are not typically read by humans. Rather, a machine is generally used to process raw log files to assist in answering questions asked by humans.

Here’s an example.

Client: We are getting reports from good customers that payments are being rejected.

This is a real world example that I was literally just asked to investigate today. In this case, I was working with the Magento platform, which, when put into “debug” mode, logs the requests and responses it makes to the payment gateway using print_r ![]()

The log files look something like…

2016-11-30T20:45:59+00:00 DEBUG (7): Array

(

[request] => Array

(

[amt] => 46.13

[acct] => ****

[expdate] => ****

[cvv2] => ****

[currency] => USD

[firstname] => Bob

[lastname] => Smith

[street] => 123 Main St

[city] => New York

[state] => NY

[zip] => 12345

[country] => US

[email] => [email protected]

)

[result] => Array

(

[result] => 125

[respmsg] => Declined by Fraud Service

[authcode] => 111111

[avsaddr] => N

[avszip] => N

[cvv2match] => Y

[procavs] => N

[proccvv2] => M

[iavs] => N

[prefpsmsg] => No Rules Triggered

[postfpsmsg] => Reject AVS

[result_code] => 125

)

)

Here are the questions I’d hope this log file can help me answer…

- Is there really an increase in orders being rejected?

- If so, how bad?

- Are there any patterns that might indicate why this is happening?

The fact that the logs are human-readable is nice if I just want to review one case. But reviewing trends over days, weeks, or months, is not something I would want to manually do.

How To Analyze A Log File With A Machine

My top commands when looking at log files are the following

The thing is, usage of this tool set is much easier if each entry in the log file is on one line (the way that Apache and Nginx do logging, for example). Here’s a command I might use to look for an increase in 503 errors over a period of time.

$ sudo tail -n 99999 /var/log/httpd/access.log \

| grep ' 503 ' \

| awk '{ print $4 }' \

| awk -F":" '{ print $1 }' \

| sort \

| uniq -c

That might print something like this…

3 [27/Nov/2016

5 [28/Nov/2016

11 [29/Nov/2016

179 [30/Nov/2016

179 503s on Nov 30th whereas there were less than 20 the past few days? Houston we have a problem.

With the payment debug logs I’d really just like to grep for “Declined by Fraud Service”, but unfortunately, I can’t easily extract any other details about these results (e.g. date) because they’re on a separate lines. grep offers -A and -B flags to grab some surrounding context, but print_r results are typically variable height so those flags don’t really help. Believe me, I’ve been down this path before.

Typically, if I want to work with these log files I wind up having to abandon pure bash and writing a little script in PHP to process the files.

json_encode To The Rescue!

Fortunately, in PHP we can output an array to a log file all on a single line using json_encode.

Now that same file will now look like this…

2016-11-30T20:45:59+00:00 {"request":{"amt":"46.13","acct":"****","expdate":"****","cvv2":"****","currency":"USD","firstname":"Bob","lastname":"Smith","street":"123 Main St","city":"New York","state":"NY","zip":"12345","country":"US","email":"[email protected]"},"result":{"result":"125","respmsg":"Declined by Fraud Service","authcode":"111111","avsaddr":"N","avszip":"N","cvv2match":"Y","procavs":"N","proccvv2":"M","iavs":"N","prefpsmsg":"No Rules Triggered","postfpsmsg":"Reject AVS","result_code":"125"}}

Now I can grep and awk and get the answers I want easily.

$ grep 'Declined' payment_file.log \

| awk '{ print $1 }' \

| awk -F"T" '{ print $1 }' \

| sort \

| uniq -c

Outside of PHP-world, no one knows about print_r format

Another problem with print_r is that it’s just a format that PHP made up to make arrays readable. This means that other tools that are language agnostic almost certainly don’t know about print_r format. A perfect example of this is Logstash, which features a json filter for processing log files (so that they can, e.g. be shipped to Elasticsearch). print_r format, needless to say, is not supported.

Conclusion

The bottom line is, print_r really isn’t a good choice for logging arrays. I hope that this article helped show you why. If you have any comments, feel free to drop a note comments below. Of course, as always, you can reach me on Twitter as well.

Hi, I'm Max!

Hi, I'm Max!